Abstract & Project Description

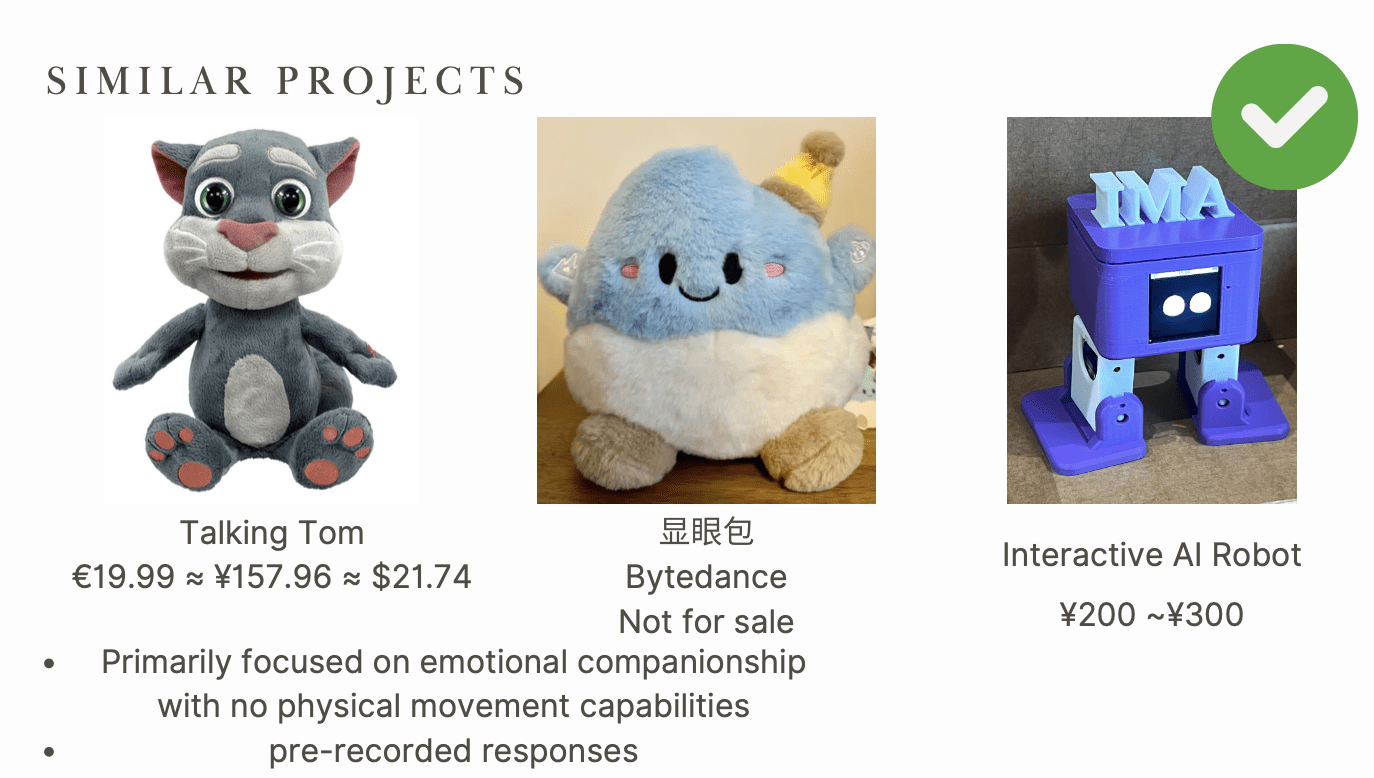

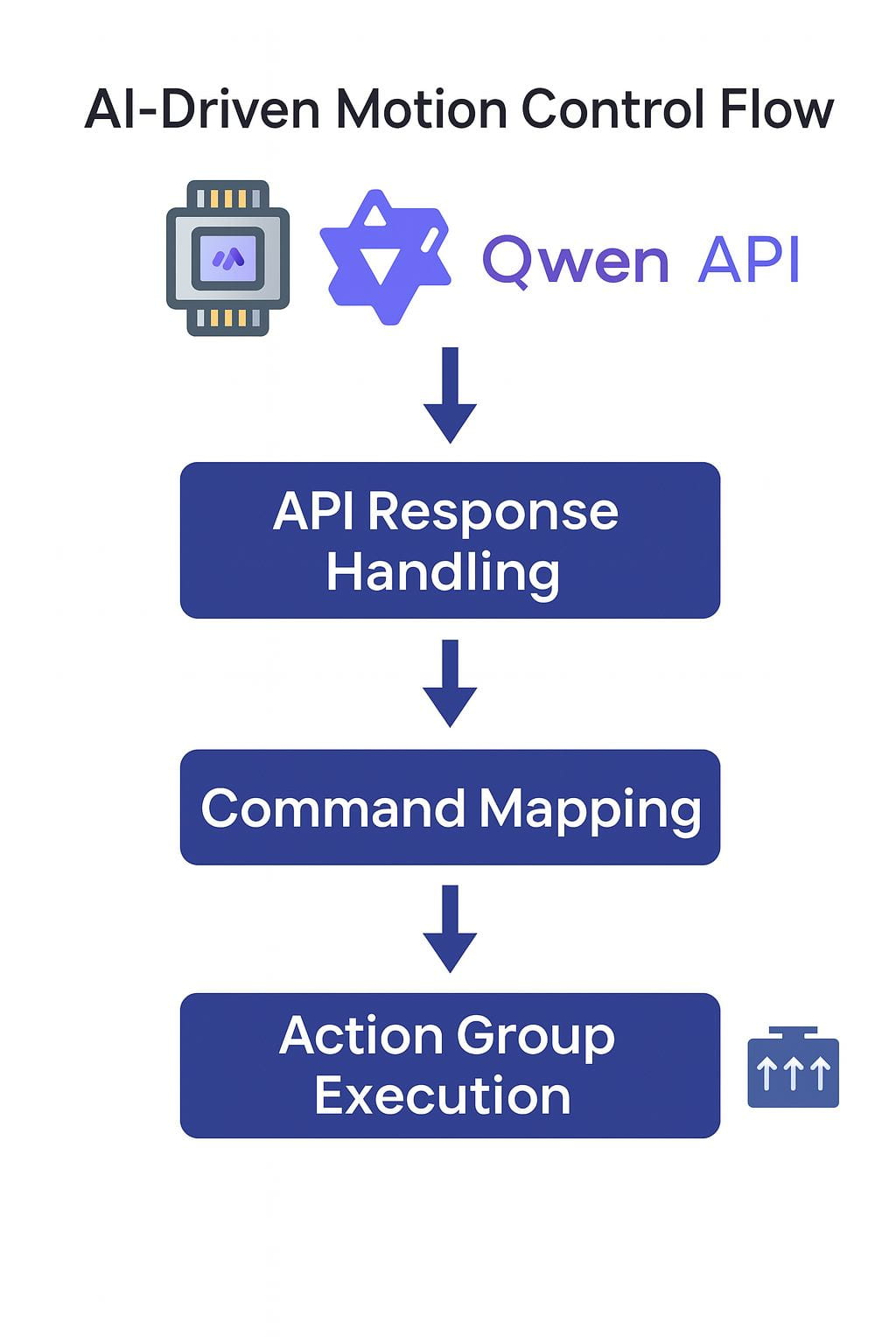

The Interactive AI Robot is a smart plush toy with real-time voice interaction and basic motion capabilities. Unlike traditional toys that rely on pre-programmed responses, it uses an ESP32 microcontroller together with the Qwen API to generate speech dynamically and process audio in real time. The goal is to simulate natural human-robot interaction through unscripted verbal replies and expressive physical feedback.

This project explores how combining natural language processing with physical motion can deliver a more engaging and emotional user experience. By turning passive responses into active conversation and movement, the robot bridges the gap between artificial intelligence and emotional companionship in toys.